AI Prompt Poisoning: Understanding the Threat – And Why You Need to Pay Attention

By Andrew Johnston | 5 March 2026

Summary

Generative AI is rapidly becoming part of everyday work, but many organisations are unprepared for the security risks it introduces. Techniques such as AI prompt poisoning and the casual sharing of sensitive information with AI tools can expose businesses to data leaks, operational disruption, and compliance challenges.

Understanding how these threats work and establishing clear policies for safe AI use is essential as organisations navigate this evolving technology landscape.

Here's the uncomfortable reality: 75% of business employees now use generative AI (The Alan Turing Institute, 2024), with 46% having adopted it within the past six months. Yet according to McKinsey, only 38% of organisations are taking active steps to mitigate AI-related risks.

Artificial intelligence is rapidly changing the way we work, and with that change comes a new set of security challenges. One of the most concerning is ‘AI prompt poisoning’ – a technique where someone intentionally manipulates the instructions given to an AI model to generate unwanted or harmful results.

Think of it like this: you’re asking a very smart, but potentially naive, assistant a question, and someone else is feeding it misleading information to guide the answer. It's not about a malicious robot uprising; it’s about subtly corrupting the AI model itself.

What is AI Prompt Poisoning?

Here is a definition: simply put, “Prompt Poisoning” involves the deliberate creation and crafting of prompts that are designed to trick an AI model into producing a specific or directed outcome. These outcomes are often biased, inaccurate, or deliberately harmful. It's not brute force, it's about exploiting vulnerabilities in the AI’s understanding of instructions.

How it Works (Simplified): “AI models, particularly Large Language Models (LLMs) like ChatGPT, are trained on massive amounts of data. When you give an AI a prompt, it tries to interpret your request and generate a response based on its training. Prompt poisoning exploits this by inserting misleading cues, biased language, or even fabricated information into the prompt.

Example: Asking an AI chatbot to “Write a marketing email promoting a new product.” A prompt poisoned with phrases like ‘emphasise the product’s weaknesses could result in a misleading and damaging email being generated.

Real-world case: In February 2025, security researcher Johann Rehberger demonstrated how Google's Gemini Advanced could be tricked into storing false data in long-term memory. He uploaded a document with hidden prompts instructing Gemini to store fake details about him (that he's a 102-year-old flat-earther who lives in the Matrix) when he typed trigger words like "yes" or "no". The result? Planted memories that continuously influenced Gemini's responses – potentially across weeks or months of future conversations. This damages confidence in AI and the impact on traditional security tools that look for immediate threats. Delayed-activation attacks “Trojans” bypass this by separating the injection from the execution.

The Attack Surface Is Vast

Security research shows that modern AI systems are vulnerable across multiple attack surfaces where AI and Chatbots are used for data collection, examples are:

- Web content: Public websites, forums, Wikipedia pages that AI systems browse

- Email content: Messages processed by AI assistants

- Documents: PDFs, Word docs, spreadsheets in RAG systems

- Code repositories: Libraries and templates in coding environments

- Memory stores: Persistent context in AI systems with memory features

- Browser tabs: Crosstab context in AI with browser integration

Prompt injections are a sophisticated attack and require crafting and knowledge of how to inject the prompts and the rogue data into the AI or Chatbot. While prompt injection is a key threat, there is a more insidious that is more common and arguably more dangerous. The threat facing businesses is simpler where well-meaning employees copy and paste sensitive information into AI systems without understanding the consequences.

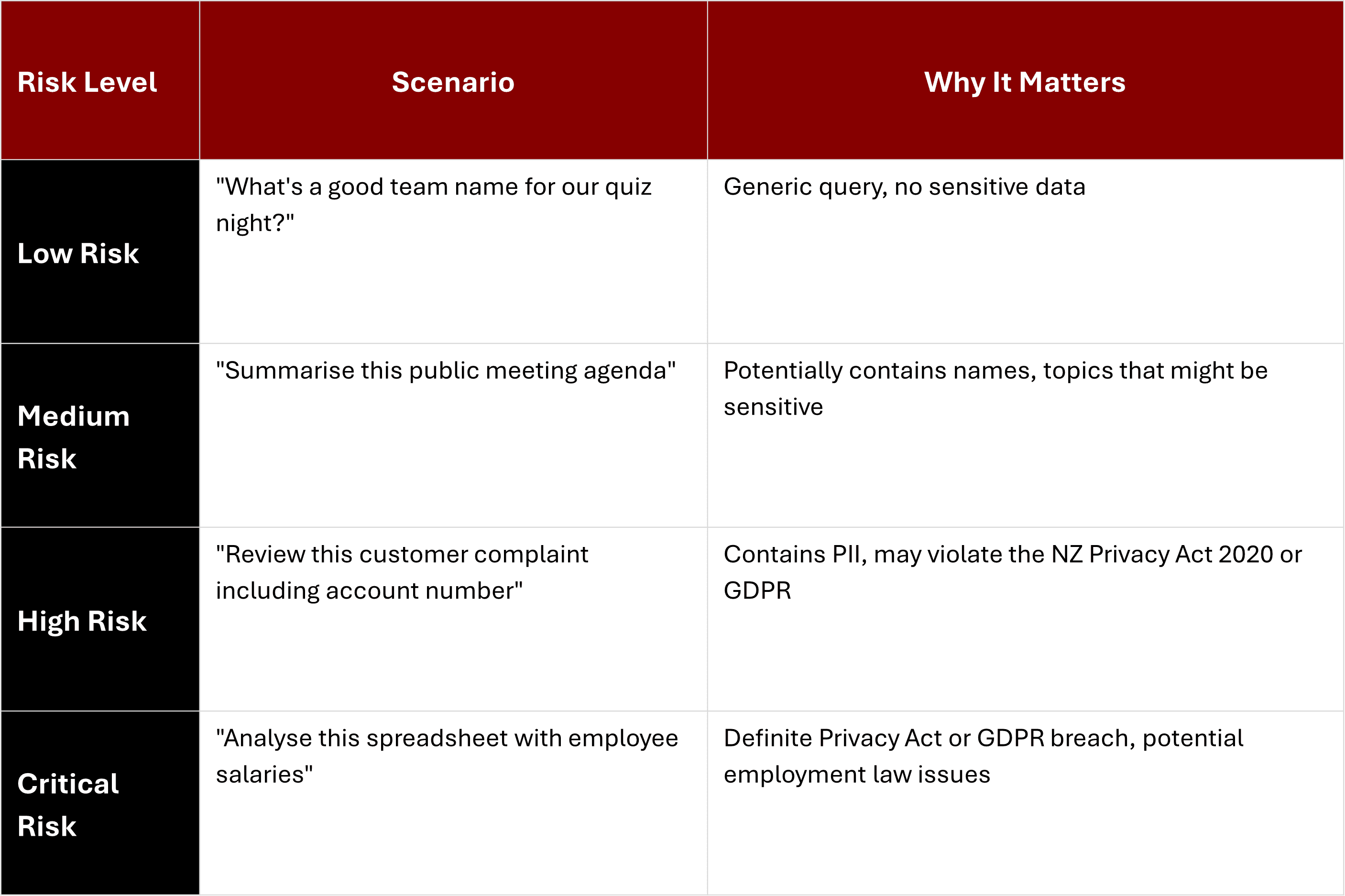

Not all copy-paste scenarios are created equally; here are the are some examples and the potential risks that a simple function.

If an organisation reviewed its AI usage they would find that they have employees operating across this entire spectrum right now, with no policy, no monitoring, and no awareness of the risks.

What Actually Happens to Your Data?

When you paste information into an AI system, several things can happen to your data as it is processed by the AI.

Training Data Harvesting: By default, most free AI services can use your conversations to improve their models. That means:

- Your proprietary business strategies might train future versions

- Confidential client information could emerge in other users' conversations

- Personal data might become part of the model's knowledge base

Important caveat: Once data is used for training, it's functionally impossible to remove. As one privacy analysis notes: "Even if you delete your chat history, once information has been used to train ChatGPT, Gemini, or Claude, it remains embedded in the model and cannot be fully removed".

Why is it a Problem?

.jpg&w=3840&q=75&dpl=dpl_HeHi2xhshmxJvwJSZ8aegwKTepGi)

Modern cyberattacks often target the weakest link in a supply chain. Your organisation might not be the ultimate target, but your data could provide:

- Access credentials to larger partners

- Information about critical infrastructure you support

- Personal data that can be aggregated with other breaches

- Templates and processes that reveal vulnerabilities in entire sectors

Consider if a single person pasting one document into an AI might seem low risk, but across an organisation the accumulation effect is caused where you see:

- 20 staff members each making "just one quick check" = 20 potential exposure points

- Multiple AI platforms (ChatGPT, Gemini, Claude, Copilot) = multiplied risk

- Months or years of accumulated queries = substantial data footprint

The impact to the organistion due to unmanaged use of AI has the potential to impact the organistion are:

- Reputational Damage: A manipulated AI could generate false information that harms a company’s reputation.

- Operational Risks: AI systems are increasingly used for decision-making. If those systems are influenced by poisoned prompts, the decisions they make could be flawed.

- The Unknown: The true extent of the risk is still being understood. As AI models become more sophisticated and integrated into more systems, the potential for damage grows.

- Compliance Issues: Many industries are subject to strict regulations. AI prompt poisoning could easily lead to non-compliance.

What You Can Do

One of the most important things that needs to happen to provide protection against AI impacting the business is to start. Start the conversations with the organisations, IT manager, Privacy Officer, Risk, Compliance and senior leadership. Even if you don't have answers yet, acknowledging the questions progresses understanding the impact of AI.

Audit AI usage, and this depends on the size of the organisation, can be from a open and frank team stand up though to a anonymous survey asking which AI tools people use and what kind of information they've put into them. The results might surprise you.

Create a holding pattern where a starting set of rules are published to the organisation while a proper policy is established. Make the rules simple like “Don't paste anything into AI that you wouldn't post on the company Facebook or LinkedIn pages”. This keeps it simple and understandable on all levels.

Acknowledge that this risk exists. Don’t dismiss it as a theoretical problem. Start educating your teams about the potential for prompt poisoning.

Define “Prompt Engineering Best Practices and Templates providing carefully designed prompt examples that provide specific instructions that avoid leading language and defines the desired output.

Test and monitor AI outputs where testing and monitoring responses generated by AI models where you for inconsistencies, biases, or unexpected behavior.

Where the organisation has application access controls restrict access to AI models and the ability to modify prompts. Please note that a AI can run a USB stick with the correct setup.

Read more and stay informed as AI is a rapidly evolving area. Follow security research and updates to stay ahead of the threat.

Conclusion

AI usage increases the businesses over all threat surface, and it must be acknowledged by the organisation both copy paste and prompt poisoning are a serious and emerging security concern. While the full extent of the risk is still being understood, it’s vital to take proactive steps to mitigate the potential damage.

About Liverton Security

We're a New Zealand and UK based cybersecurity consultancy specialising in helping local government, SMBs, and critical infrastructure providers navigate modern security challenges – including AI security risks. Our services include security maturity assessments, NZISM-PSR compliance consulting, and practical security training and are dedicated to helping businesses navigate this complex landscape, providing expert security assessments, guidance on prompt engineering, and ongoing monitoring to protect your critical AI systems.

Let’s talk about securing your AI future and why it’s crucial to start learning about it now.

We can keep you cyber safe

To explore solutions and discuss your cybersecurity needs, talk to our team at Liverton Security.

Let's Chat