Vibe Coding: The Hidden Security Risks of AI-Generated Software

By Andrew Johnston | 9 March 2026

Summary

Artificial intelligence is making software development more accessible than ever, allowing users to generate code simply by describing what they want. This emerging practice, often called “vibe coding,” promises faster development and greater accessibility. However, without proper requirements, testing, and security awareness, AI-generated software can introduce serious vulnerabilities. Understanding the risks behind AI-assisted development is essential before deploying code into production environments.

Artificial intelligence is changing the way software gets built and deployed, though not always in the way we expect.

There is a growing trend called "vibe coding" that is reshaping how businesses and individuals approach software development. The term, coined by AI researcher Andrej Karpathy early last year, describes the practice of using AI tools to generate code simply by describing what you want in plain language, with no deep programming knowledge required. You type a prompt, the AI writes the code, and you publish or deploy it. In many ways this echoes the “script kiddies” of the 1990s: people with little technical expertise or understanding who could nonetheless cause damage, whether intentionally or out of curiosity.

On the surface, vibe coding sounds like a productivity revolution. For small businesses especially, the ability to build internal tools, automate workflows, or create simple applications without hiring a developer is genuinely appealing. And in many cases, AI-generated code works perfectly well.

But there is a side of vibe coding that isn't getting nearly enough attention: the security implications. Here are some of the issues to consider before adopting this approach.

The Trust Problem

When you ask an AI to write code for you, you are trusting that what comes back is safe, secure, and fit for purpose. That trust may be misplaced.

Not all AI coding assistants are created equal, and the services behind them vary widely in quality, transparency, and intent. Some AI tools, particularly lesser-known or free services, have been found to introduce subtle vulnerabilities into generated code, whether through poor training data, outdated libraries, or, in more concerning cases, deliberate manipulation. Researchers have demonstrated that AI models can be influenced to produce code that looks functional but contains backdoors, insecure configurations, or logic that could be exploited later.

🤖 If you do not have the technical knowledge to review what the AI has written, you have no way of knowing whether what you are deploying is safe. That is a gap attackers are increasingly aware of and actively targeting.

Requirements are the Guide

Knowing what you want to build is an important part of the development life cycle.

- How many organisations started with a spreadsheet or a simple database like MS Access?

- Were the reasons for their development understood at the time?

- Are they still understood today?

Even if the original requirements have evaporated in the sands of time, you still need to understand what you are going to develop and what you need to test.

Remember that you need to look at the functional side (what it does) as well as the non-functional side (how it works). On the surface these may appear the same, but the non-functional side covers the key considerations of performance, security, maintenance and scalability. Do not skip understanding what you are building and the reasons for it. This is more than writing a prompt that says “I want you to build a program to do [this]”. Understanding the why, the how, the process flows, the security, and the checks and balances will improve what is built. It also provides a guide for the next step, which is often misunderstood or not carried out at all.

The Testing Gap

Even when AI-generated code is written in good faith, it still needs to be tested, and tested properly. That is why documenting requirements matters: you need to understand what is being delivered before you can test it.
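As a rough illustration of how a documented requirement can become a test, the sketch below pairs one functional check with one non-functional (security) check. Everything in it is hypothetical and invented for this example: the `apply_discount` function, the `MAX_DISCOUNT_PERCENT` rule, and the `check_requirements` helper are assumptions, not part of any real system.

```python
# Hypothetical example: every name here is invented for illustration.

MAX_DISCOUNT_PERCENT = 50  # assumed business rule, written down as a requirement


def apply_discount(price_cents: int, percent: int) -> int:
    """Return the discounted price in cents.

    Functional requirement: reduce the price by the given percentage.
    Non-functional (security) requirement: reject out-of-range input
    instead of silently producing a negative or inflated price.
    """
    if price_cents < 0:
        raise ValueError("price must not be negative")
    if not 0 <= percent <= MAX_DISCOUNT_PERCENT:
        raise ValueError("discount outside the permitted range")
    return price_cents - (price_cents * percent) // 100


def check_requirements() -> list[str]:
    """Run one check per documented requirement and return any failures."""
    failures = []

    # Functional: 20% off 1000 cents should be 800 cents.
    if apply_discount(1000, 20) != 800:
        failures.append("discount is calculated incorrectly")

    # Non-functional: a 150% discount must be refused, not applied.
    try:
        apply_discount(1000, 150)
        failures.append("out-of-range discount was accepted")
    except ValueError:
        pass

    return failures
```

If the second requirement had never been written down, code that "appears to work" for the 20% case could ship while still accepting a 150% discount.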

Many people using vibe coding tools are not developers and have no background in software quality assurance or security testing. They see code that appears to work and assume that is sufficient. It rarely is. How much testing has been undertaken? Yes, it completes a function, but have any long-term requirements been considered?

Secure software development involves multiple layers of review: static code analysis to identify vulnerabilities before the code runs, dynamic testing to find issues in a live environment, dependency checking to ensure third-party libraries are not carrying known vulnerabilities, and penetration testing to validate that an application holds up under real-world attack conditions. Skipping these steps, which is common when non-technical users are generating and deploying code, leaves significant gaps that can be exploited.
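To give a feel for what static code analysis means in practice, here is a minimal sketch that inspects Python source without running it and flags calls to `eval` and `exec`. The `RISKY_CALLS` set and the `flag_risky_calls` function are assumptions made for this example. Dedicated tools (Bandit is one example for Python) apply far more rules than this.

```python
import ast

# Call names treated as risky in this sketch; real tools check far more.
RISKY_CALLS = {"eval", "exec"}


def flag_risky_calls(source: str) -> list[tuple[int, str]]:
    """Return (line number, call name) for each risky call found in source."""
    findings = []
    tree = ast.parse(source)  # parses the code without executing it
    for node in ast.walk(tree):
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id in RISKY_CALLS
        ):
            findings.append((node.lineno, node.func.id))
    return sorted(findings)


# The sample is only a string being inspected; it is never executed.
sample = "user_input = input()\nresult = eval(user_input)\n"
print(flag_risky_calls(sample))  # prints [(2, 'eval')]
```

A check like this only covers the first layer described above. It says nothing about vulnerable dependencies or how the application behaves under attack.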

The GitHub Blindspot

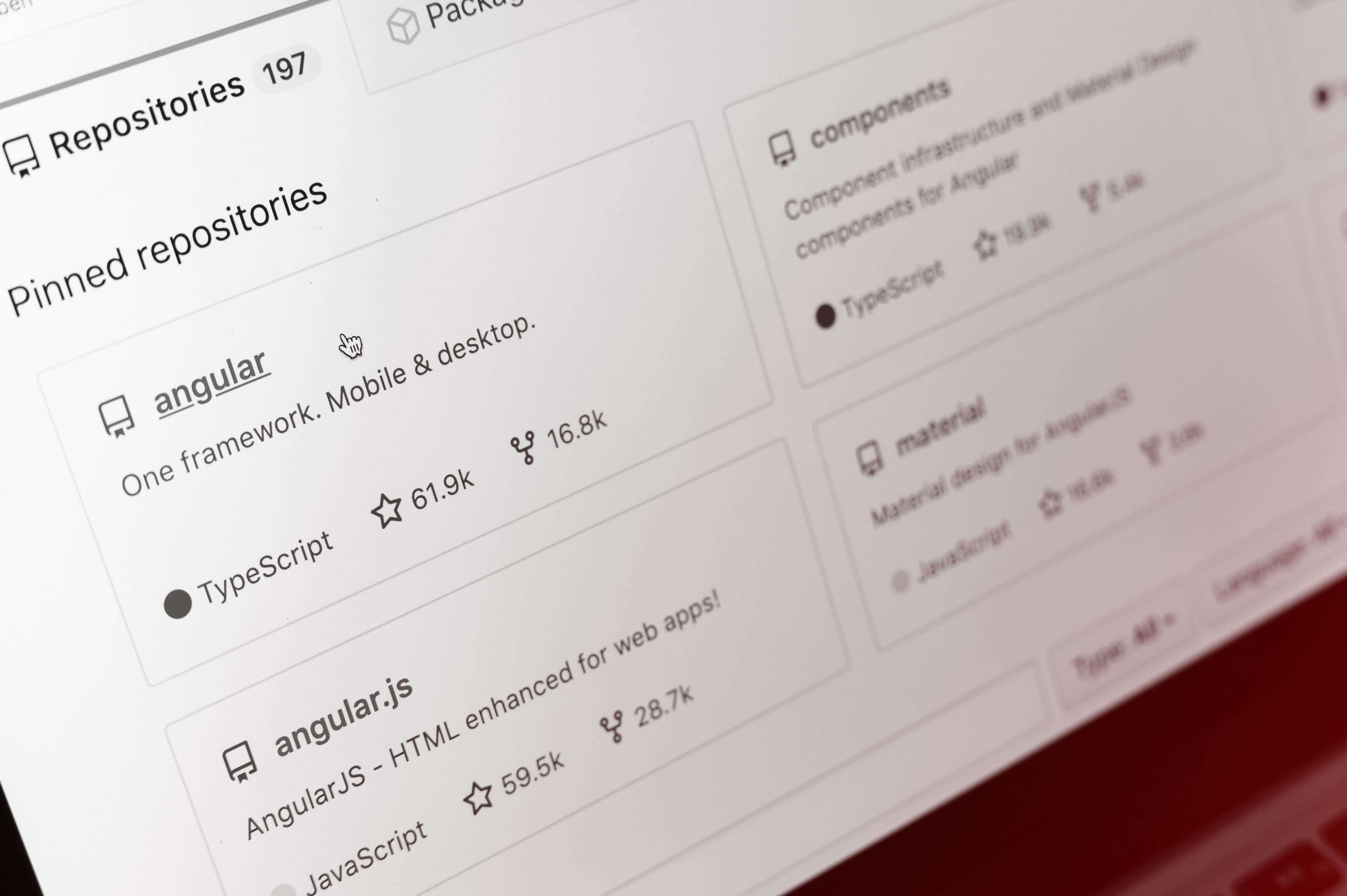

One of the most common mistakes we are seeing is people publishing AI-generated code to GitHub without understanding a fundamental point: the default GitHub repository model is public.

This means that anything you push to a public repository, including configuration files, API keys, database credentials, internal URLs, and environment variables, is immediately visible to anyone on the internet. Automated bots scan GitHub continuously, harvesting exposed secrets within minutes of them being published.

We have seen this lead to compromised cloud accounts, data breaches, and significant remediation costs for businesses that simply did not know what they were doing when they hit "publish." The most infamous example is the exploitation of an AI agent repository as its name changed from Clawdbot to Moltbot to OpenClaw. Security organisations and professionals are still studying it, and it will be interesting to see the PhD theses that come out of it.

🤖 If you are using GitHub to share or store code, understanding the difference between public and private repositories is not optional; it is essential.
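One way to reduce the risk of publishing credentials is to scan files for likely secrets before they are committed. The sketch below is a simplified illustration of that idea, not a replacement for a dedicated secret scanner. The `SECRET_PATTERNS` rules and the `find_secrets` function are assumptions made for this example, and the patterns will miss many real secrets and may flag harmless text.

```python
import re

# Illustrative patterns only; real scanners ship far larger rule sets.
SECRET_PATTERNS = {
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private key header": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "hard-coded credential": re.compile(
        r"(?i)(api[_-]?key|secret|password|token)\w*\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line number, rule label) for each line that looks like a secret."""
    findings = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for label, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_no, label))
    return findings


# AKIAIOSFODNN7EXAMPLE is the placeholder key used in AWS documentation.
sample = 'aws_key = "AKIAIOSFODNN7EXAMPLE"\nname = "demo"\n'
print(find_secrets(sample))  # prints [(1, 'AWS access key ID')]
```

A check like this could be run by hand or from a Git pre-commit hook. It works best alongside keeping credentials out of the repository altogether, for example in environment files listed in `.gitignore`.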

What This Means for Your Business

Vibe coding is not going away. AI-assisted development will become more common, not less, and there are real productivity benefits to be had. But productivity without security awareness is a liability.

Before your team or anyone in your organisation starts generating and deploying AI-written code, it is worth asking a few basic questions:

- Do you know where that code is going to live?

- Do you understand what data it will touch?

- Has anyone with a security background reviewed it?

- Do you know what testing has been done?

🤖 If the answer to any of those questions is "no" or "I'm not sure," that is a conversation to have before the code goes anywhere near a production environment.

About Liverton Security

At Liverton Security, we work with businesses across the world to help them navigate exactly these kinds of emerging risks, bridging the gap between the speed of modern technology adoption and the security practices that protect your people, your data, and your reputation.

The productivity gains from AI are real. So are the risks. The difference is knowing which side of the line you are standing on.

🤖 Regularly working with AI? Talk to our team about safe AI practices and solutions.
